Every agent needs a keeper · D&D-AI · 2026

AI builds it. Mindling proves it.

A mindling is what an AI agent becomes when a human is still accountable for the outcome.

AI made individual builders as productive as small teams. Nobody built the operating layer for what comes next — scope, context, proof, review, and trust. That layer is Mindling: the governance, memory, and evidence system that keeps humans in control of what agents build.

The tension

Developers are moving faster than they are moving confidently.

84% of developers use or plan to use AI tools · Stack Overflow 2025 Developer Survey

29% trust AI outputs to be accurate · same developers, same survey. The gap between speed and confidence is the product.

estimated build-ticket equivalents to reach Mindling's current state · backcast from the app, issue lifecycle, routing, QA, exports, company model, and diligence package

"The opportunity is not 'AI writes code.' The opportunity is the operating layer that governs what AI writes — the mindling layer: memory, intent, nexus, delivery, lineage, integrity, notation, governance."

The moment

AI made individuals as productive as small teams.

No one built the operating layer for what comes next.

The wave has already landed. Stack Overflow confirms it: 51% of professional developers now use AI tools daily. McKinsey lists agentic AI among the defining enterprise technology trends of 2025. GitHub Copilot, Cursor, and Claude Code are not experiments. They are how developers work.

But that same evidence reveals a structural problem: 84% adoption, 29% trust. Developers are moving faster than they are moving confidently. The bottleneck has shifted from writing code to knowing it is right — and no tool in the current market is built specifically for that gap.

The open question is not whether AI coding becomes normal. It already is. The open question is who will build the layer that makes AI-assisted work trustworthy, auditable, and safely closeable.

Why now

Adoption outpaced trust

51% daily AI usage. 29% trust rate. The discipline layer is behind the adoption curve — which means the timing is right, not early.

The gap

No dominant player in workflow governance

Copilot writes code. Linear tracks tasks. Nobody owns the operating discipline around AI-assisted delivery.

What Mindling means

Mind + ling

Mind — the AI agent. The intelligence building and executing.

-ling — Old English: one under the care of, one governed by. A fledgling hasn't flown alone yet. A mindling is a mind not yet trusted to ship without proof.

Together — the layer that turns raw AI output into a governed, traceable workflow a human can sign off on.

The builder

Investors don't fund products.

They fund people.

Builder · Mindling

Owner · All 15 departments

Duane is a solo builder who found himself in exactly the problem Mindling solves. Working with AI tools every day, he watched context get lost between chat and code, watched tasks get marked done that were not done, watched handoffs fail because agents had no structured scope to work from. He did not research the problem. He lived it.

Rather than ship faster and hope for the best, he built the discipline layer his own workflow was missing. The result is Mindling — a system he uses to build Mindling itself. Every issue that adds a feature to the product first passes through Mindling's own intake, routing, QA gates, and owner approval. The product is its own proof of concept.

The company model — 15 departments, 14 named agents, explicit permissions, evidence contracts — is not a pitch deck diagram. It is the live operating structure of the D&D-AI workspace, right now, on a local machine.

Built with the discipline it teaches

Mindling governs its own development. Every feature request enters as a structured issue. Engineering requires an approved scope and an evidence gate. QA requires evidence of browser behavior and a reproducibility note. The owner, Duane, approves before close. The product is not a theory about what good AI-assisted workflow looks like. It is a record of what that workflow actually produces.

Why the name · three roots · one meaning

Swedish myndling — a legal ward under structured governance. One who is governed, not merely supervised.

Old English -ling — one who belongs under the care of something larger. Never fully autonomous.

English mind — the intelligence being governed. The agent. The thing that builds.

The problem

AI speed creates a new kind of debt.

Context debt.

Before AI, development was slow enough that discipline was built in by default. AI removed the friction — and that is both the gift and the trap.

"A mindling is not a tool. It is not an agent. It is what an agent becomes when a human is still responsible for the outcome."

Where context disappears

Work starts in chat. It moves into code. Then it scatters across local files, screenshots, test logs, and follow-up notes nobody can find three days later. The original intent — what was actually requested — is usually the first thing lost.

What breaks AI handoffs

AI agents need scope, file boundaries, acceptance criteria, expected evidence, and stop conditions. Feed them a vague prompt and they will build something. Just not necessarily the right thing. The output looks complete. The context was never there.

The phantom close

Tickets get marked done. Features ship. But done is a feeling, not a fact, unless someone has verified tests, confirmed browser behavior, documented the decisions, and explicitly approved the result. Most builders never reach that bar — not because they're careless, but because no tool makes it visible.

Who feels this most

Solo founders, indie developers, small teams moving fast. Exactly the people who have adopted AI tools most aggressively — and who have the least infrastructure to catch what slips through.

The solution

Mindling is the operating layer AI-assisted builders don't have.

In plain language: Mindling turns a pile of chats, code changes, screenshots, and decisions into one visible path from idea to finished, verified, approved work. It treats every project like a compact software company — with planning, engineering, QA, risk review, and owner sign-off each having a real and enforced job.

Mindling does not write your code. It governs what your AI agents build, ensures the right context travels with each task, requires proof that work succeeded, and keeps the human in explicit control of what actually ships. Local-first. Your project data stays on your machine.

01 · Intake

Capture context

A goal becomes a structured issue: project, scope, module boundaries, and source gates defined before anything is handed to an agent.

02 · Scope

Define acceptance

Planning defines acceptance criteria, out-of-scope items, and stop conditions. An agent with a clear scope is far more useful than one working from a vague prompt.

03 · Route

Choose the agent

Mindling recommends which agent or department handles the work, with full context, evidence expectations, and stop conditions pre-loaded.

04 · Build

Implement the slice

Engineering implements the approved scope and returns changed files, tests, and risks — nothing advances without the evidence package.

05 · Verify

Demand proof

QA checks browser behavior, screenshots, console errors, and reproducibility. Evidence gates keep an issue from advancing until that proof exists.

06 · Review

Release risk check

Release Risk reviews scope creep, external dependencies, and clearance status before the issue reaches the owner.

07 · Approve

Explicit owner sign-off

Owner approval is never implied. The system requires an explicit decision — accept, rework, or split. No phantom done states.

08 · Close

Document and close

Docs and wiki updates are part of the close gate. Memory is produced during delivery, not as an afterthought nobody writes.
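Read together, the eight stages behave like a gated state machine: an issue leaves a stage only when that stage's required evidence is present, and closing needs an explicit owner decision. The Python sketch below illustrates that idea under stated assumptions; it is not Mindling's code. The stage names follow the list above, while `Issue`, `REQUIRED_EVIDENCE`, the evidence keys, `advance`, `rewind`, and the choice that rework returns to Build and split returns to Scope are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Stage(Enum):
    INTAKE = 1
    SCOPE = 2
    ROUTE = 3
    BUILD = 4
    VERIFY = 5
    REVIEW = 6
    APPROVE = 7
    CLOSE = 8


class Decision(Enum):
    ACCEPT = "accept"
    REWORK = "rework"
    SPLIT = "split"


# Hypothetical evidence keys each gate requires before an issue may leave that stage.
REQUIRED_EVIDENCE = {
    Stage.INTAKE: {"project", "scope", "module_boundaries"},
    Stage.SCOPE: {"acceptance_criteria", "out_of_scope", "stop_conditions"},
    Stage.ROUTE: {"assigned_agent"},
    Stage.BUILD: {"changed_files", "tests", "risks"},
    Stage.VERIFY: {"browser_behavior", "screenshots", "console_errors", "repro_note"},
    Stage.REVIEW: {"release_risk_clearance"},
    Stage.APPROVE: set(),  # approval is an explicit owner Decision, not an evidence item
    Stage.CLOSE: {"docs_updated"},
}


@dataclass
class Issue:
    title: str
    stage: Stage = Stage.INTAKE
    evidence: dict = field(default_factory=dict)
    decision: Optional[Decision] = None
    closed: bool = False


def missing_evidence(issue: Issue) -> set:
    """Evidence keys the current stage's gate still lacks."""
    return REQUIRED_EVIDENCE[issue.stage] - set(issue.evidence)


def rewind(issue: Issue, to: Stage) -> None:
    """Send an issue back, discarding evidence from `to` onward so later gates re-check."""
    for stage in Stage:
        if stage.value >= to.value:
            for key in REQUIRED_EVIDENCE[stage]:
                issue.evidence.pop(key, None)
    issue.stage = to
    issue.decision = None


def advance(issue: Issue) -> Issue:
    """Move an issue forward one stage, or raise if its gate is not satisfied."""
    if issue.closed:
        raise ValueError("issue is already closed")
    gaps = missing_evidence(issue)
    if gaps:
        raise ValueError(f"{issue.stage.name} gate blocked; missing {sorted(gaps)}")
    if issue.stage is Stage.APPROVE:
        if issue.decision is None:  # owner approval is never implied
            raise ValueError("APPROVE gate blocked; explicit owner decision required")
        if issue.decision is Decision.REWORK:
            rewind(issue, Stage.BUILD)  # assumption: rework means a new build slice
            return issue
        if issue.decision is Decision.SPLIT:
            rewind(issue, Stage.SCOPE)  # assumption: split means re-scoping first
            return issue
    if issue.stage is Stage.CLOSE:
        issue.closed = True  # close only after the docs gate passes
        return issue
    issue.stage = Stage(issue.stage.value + 1)
    return issue


# Usage: intake evidence lets the issue reach Scope, where the next gate blocks it.
issue = Issue("Add PDF export")
issue.evidence.update(project="mindling", scope="export", module_boundaries="exports/")
advance(issue)  # INTAKE -> SCOPE
try:
    advance(issue)
except ValueError as err:
    print(err)  # SCOPE gate blocked; missing ['acceptance_criteria', 'out_of_scope', 'stop_conditions']
```

The design choice worth noting is `rewind`: sending work back discards downstream evidence, so a reworked slice cannot reach the owner on stale proof.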

Evidence

This is not a pitch deck for something that might exist.

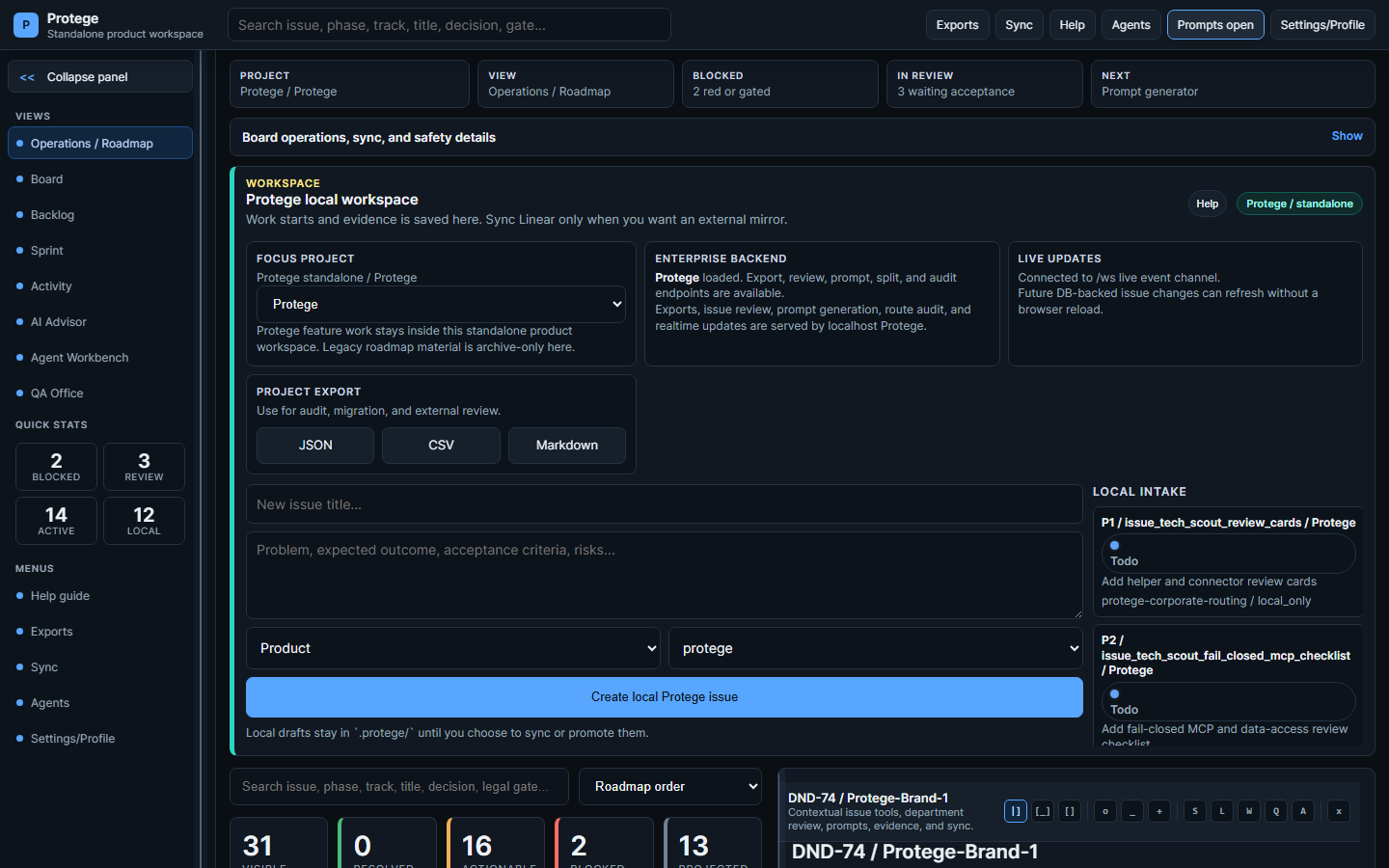

Early-stage products often ask you to bet on a vision. This one asks you to look at a working foundation. The core Mindling application runs on a local machine today — with real pages, automated verification checks, and real enforced boundaries.

Live application

Mindling runs as a local FastAPI app. The command board, routing advice, corporate registry, and focus router are real pages you can open in a browser — not wireframes or mockups.

108 verification checks

The latest verified Mindling product slice has 108 automated verification checks. Every investor claim in this document is tied to implementation evidence. Not projections. Not promises.

Company model

15 departments, 14 named agents, explicit permission levels, and evidence contracts for every department gate — modeled in a live corporate registry, not a planning document.

Explicit safety boundaries

No hidden installs. No silent mutation of Git state, Linear, or MCP configuration. Boundaries are encoded in the system — not described in a policy no one enforces.

Focus router

Work is classified by project, track, module, and product boundary before any agent touches it. Agents receive structured context, not vague prompts that produce scope drift.

Architecture versioned

Implemented slices in green, next planned slices in amber, blocked paths in red. The roadmap is honest about what exists and what does not.

The market

The coding tools market is already won.

The workflow layer around it is open.

Developer tools is a proven, large, and growing category. The opportunity Mindling addresses sits above the coding layer — the operating discipline around AI-assisted delivery — and that layer is essentially unclaimed.

Global professional developers · the addressable individual market, growing as AI lowers the barrier for new builders and vibe coders

51% · daily AI tool users among professional developers today. Already mainstream: the workflow layer is behind the adoption curve, which means the timing is right, not early.

0 · dominant players in AI workflow governance. Copilot writes code. Linear tracks tasks. Nobody owns the operating discipline around AI-assisted delivery.

Initial users

Solo founders, indie developers, vibe coders, small software teams, and project owners who want AI speed with visible control. People who already feel the context debt problem every working day.

Expansion path

AI-native agencies, product studios, internal innovation teams, and small engineering groups adopting AI coding tools and needing governance before they can confidently scale.

Competitive positioning

Existing tools are useful slices.

Mindling coordinates the whole path.

| Tool / Category | What it does well | What it cannot do | Mindling's edge |
|---|---|---|---|
| GitHub Copilot / Cursor | Autocomplete and AI code generation at point of typing | No scope, no context governance, no QA enforcement, no owner sign-off | Mindling wraps what the agent builds with intake, evidence gates, and approval |
| Linear / Jira | Issue tracking, status boards, team coordination | Not built for AI agents. No routing logic. No evidence contracts. No QA gates. | Mindling is designed around agent handoffs from the start, not bolted on |
| Notion / Obsidian | Documentation, notes, knowledge storage | Passive. No workflow enforcement. No delivery gates. No AI routing. | Mindling produces memory during delivery: docs are a close gate, not an afterthought |
| Claude Code / Codex | Agentic coding with file access and multi-step execution | No operating context, no scope boundaries, no QA verification, no human checkpoint | Mindling provides the operating layer that makes these agents trustworthy to deploy |
| Generic PM tools | Project status, roadmaps, team velocity | Built for human workflows. Cannot govern AI agent work or enforce evidence contracts. | Mindling is AI-workflow-native: built for the new way developers actually work |

Sovereign workspace

Enterprise-grade AI without the enterprise-grade data leak.

Local-first operating layer

Issue context, routing guidance, project memory, screenshots, and workflow evidence stay on the builder's machine — no mandatory cloud upload.

Hybrid AI path

Hosted tools when they are the best fit. Local models, local MCP servers, and project knowledge for sensitive work. The choice stays with the owner.

Knowledge graph access

Review work reads the project's wiki, manuals, source paths, and decision log — not chat memory that evaporates after the session ends.

Distribution boundaries

Public, private-share, and diligence-only artifacts separated before export so proof can travel without exposing sensitive internal context.

The architectural moat

Local-first with hybrid AI routing is not just a privacy feature — it is a structural advantage. As enterprises grow increasingly cautious about where AI workloads run, Mindling's architecture is already positioned correctly. The data governance decision is made at the design level, not retrofitted.

Venture readiness

Every department makes Mindling more investable.

Strategic Advisory

Turns market timing, founder-market fit, sequencing, and category choice into explicit decisions instead of vague optimism.

Sales & Investor Relations

Owns the investor narrative, objection handling, demo path, proof selection, and next action for sponsor and VC conversations.

Product Planning

Protects the wedge, audience, scope, acceptance criteria, and public/private/diligence boundaries before work expands.

Design & Brand

Makes the product feel fundable: coherent visual system, credible diagrams, polished artifacts, and presentation-quality proof.

Engineering + QA

Converts claims into running software, tests, browser evidence, PDF checks, and repeatable verification instead of hand-waved progress.

Docs + Release Risk

Prepares diligence memory, manuals, source-backed claims, privacy review, distribution lanes, and counsel-sensitive boundaries.

The operating system underneath is being shaped for venture diligence

The current ask remains a development sponsorship, not a securities offering. But the foundations venture diligence looks for (a clear thesis, a visible product, evidence discipline, controlled risk, and a path from solo-founder proof to a repeatable company motion) are being built now.

The immediate ask

90 days. $250 a month.

A bet on a builder who ships.

The immediate bottleneck is not a cloud bill or a team salary. It is reliable access to professional AI development capacity and the practical costs of a serious local development environment. This is not a securities offering — no equity, no SAFE, no return promises appear here.

Essential

$100

per month · $300 total

Core AI coding subscription so development does not stop. The minimum that keeps the build moving forward.

≈ a dinner out, once a month

Builder

$250

per month · $750 total

AI subscription plus practical operating costs: internet, power, storage, domain, tool margin, and an operating cushion. This is what makes the work sustainable and consistent.

≈ $8.33 a day · less than a developer's hourly rate

Strategic

$500

per month · $1,500 total

Faster demo polish, monthly sponsor reporting, accelerated investor-readiness packaging, and named acknowledgment in the launch materials.

Opens the formal investor conversation earlier

This is a proposed development sponsorship. It does not create ownership, repayment rights, profit participation, or investment rights unless separately documented with qualified counsel.

Public support is collected through the Mindling GoFundMe campaign. The first public milestone is $4,000 for the 90-day development push.

Open GoFundMe

90-Day execution plan

What sponsorship unlocks in three months.

| Period | Focus | Key deliverable |
|---|---|---|
| Month 1 | Polish & Stabilize | Refine the investor package, capture public-safe demo evidence, stabilize the development budget, and define the first external-user onboarding path. |
| Month 2 | Deepen & Validate | Improve issue lifecycle surfaces, sharpen the product narrative, build sample project onboarding, and strengthen QA gates with documented evidence. |
| Month 3 | Prepare & Present | Deliver a structured sponsor update, launch a stronger beta path, and produce a counsel-ready investor conversation packet if the evidence warrants it. |

Decision point at day 90

You hold the options

Continue development sponsorship

Pause with a clean exit

Invite a strategic advisor

Begin a formal investment conversation with counsel-reviewed terms

No obligation beyond the sponsorship term. No hidden commitments. Transparent reporting throughout.

Expected investor questions

The questions are answered before the meeting.

| Question | Answer |
|---|---|
| What exists today? | A running local app with work intake, focus guidance, workflow pages, evidence capture, package export, PDF export, and repeatable verification. 108 automated verification checks. |
| Who feels this first? | Builders already using AI coding tools who spend too much time reconstructing context, checking work, collecting proof, and deciding whether output is safe to move forward. |
| Why not Linear, Jira, Notion, or Cursor alone? | Those tools are useful slices. Mindling coordinates across them: work context, AI routing, evidence contract, quality review, risk review, docs memory, and explicit owner approval. |
| Why can this work? | Built from actual daily pain of AI-assisted delivery, with local-first workflow memory and review checkpoints instead of generic PM language applied to a new problem. |
| What does 90 days prove? | Whether Mindling can move from internal proof to repeatable demo, first external-user onboarding, stronger beta path, and a credible next funding or advisory conversation. |
| What is still unproven? | External users, willingness to pay, final pricing, support burden, and which channel converts first. The sprint is designed to turn those unknowns into evidence. |

Risk and mitigation

The risks are named.

The path still points forward.

Solo-founder execution

Mitigate with small milestones, visible proof, sponsor updates, and advisor checkpoints. The 90-day structure is designed for exactly this.

Market education

Start with AI-assisted builders who already feel the workflow pain instead of educating cold enterprise buyers. The early adopter is warm and identifiable.

AI pricing changes

Stay connector-agnostic so Mindling routes work across Codex, Claude Code, local models, and future tools as the AI landscape evolves.

Scope creep

Keep Mindling focused on the operating path: scope, route, verify, approve, document, and close. Mindling's own governance holds its development to that same discipline.

Legal terms

Keep sponsorship separate from investment rights unless counsel documents a formal agreement. This boundary is explicit throughout the package.

Product polish

Use go-to-market, design, quality, and risk review before artifacts leave the local workspace. The system enforces what the process requires.

Private contact · next action

Back the operating layer while it is still early.

If you have ever built something and wondered whether it was truly done — this is the product.

Mindling is named after what an AI agent becomes when a human is still accountable for what it produces. Not fully autonomous. Not fully manual. Governed, traceable, and proven before it ships.

The word comes from three places at once: the Swedish myndling — a legal ward under structured governance. The Old English -ling — one who belongs under the care of something larger. And the English mind — the intelligence being governed. Three languages. One product thesis.

Recommended first step: a 30-minute private briefing. Live product, live evidence, live 90-day plan. No pitch deck theater.

"Every agent needs a keeper. Mindling is the keeper."

Diligence package

Private detail on request

Architecture docs, planning files, routing logic, and detailed workflow evidence available to serious sponsors under a private review agreement.

Privacy note

All conversations are private. Contact details are for sponsorship discussion only. Do not redistribute without a Release Risk review.